2025-07-03 15:56:00

Backups are important! Remember kids, Jesus saves (and makes incremental backups)!

Jokes aside, having a solid backup of everything your company or life depends on is crucial. Don't rely on your computers always working the way they should and don't assume that your cloud provider makes backups of all your data.

Case in point: Microsoft cloud services like MS365, OneDrive or Azure may offer highly available storage. They may even offer some additional backup services for a fee. But if for some godforsaken reason things go really wrong, you'll lose it all.

My companies both use Microsoft MS365 and Azure Active Directory (aka Entra ID). For now they rely upon those; it's their sole productivity base.

I've got the MS365 backups covered (including e-mail, OneDrive, Teams etc) using Synology's wonderful Active Backup software. At the time I'd bought a Synology 19" rackable system, which includes the full license for Active Backup for MS365. How awesome is that?! Buy a NAS, get a full cloud backup included!

Yes, I've tested the backups and restoration: the Active Backup tooling is wonderful!

What it doesn't have is backups for my IAM and RBAC user account administration, that is: Entra ID. Unfortunately there's no Synology built-in solution for it either.

I did some investigation and there's quite a few companies offering SaaS solutions for Entra ID backups. Companies big and small, US and EU, affordable and expensive. Ironically, most of the smaller SaaS providers store your backups on Azure. :D

Of the SaaS providers, Keepit.com felt the best to me as they back up to their privately owned and built cloud environment in the EU. Ruud, from LazyAdmin, trusts Afi.ai which also looks decent.

Maybe I'm paranoid or overly careful, but it just doesn't sit right with me. I'm giving some third party full read-write access to my company's IAM and RBAC systems. If they get hacked, I'm fully pwned. I don't like it. Sure, all the big SaaS providers say they're trusted and used by big international companies! But... no I'm not doing it.

I chose to run my Entra ID backups on-prem, exactly like I'm running my MS365 backups on-prem. And there's one trusted company who offers that: Veeam.

Veeam Backup for Entra ID is offered both as a SaaS solution and as on-prem, locally hosted software (deployment options here). Their messaging unfortunately is conflicting!

My experience:

There is only one thing remaining to complete my 3-2-1 backup strategy: off-site, offline storage, for both MS365 and Entra ID. And luckily my new Veeam backup server will help with that as well!

The SaaS services like Keepit.com, Afi.ai or Veeam's own service offer interesting pricing. While Keepit.com don't tell you their pricing, Afi want $36 per user per year and Veeam's ask is $14.10 per user per year. Afi also includes MS365, which is of course a nice bargain.

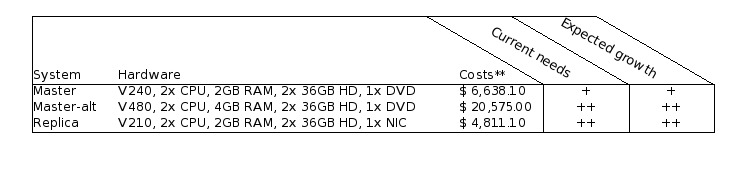

If like me you want to run things on-prem, other costs need to be factored.

For anything Entra ID with less than 100 users, Veeam itself is free thanks to their very generous Community Edition. Of course you do need to run it on something. I've opted for Windows Server 2025 in a 4-core VM, which will set me back €233 per year (excl VAT).

For hardware I'm using a Dell Optiplex, which I got for around €590 (excl VAT). The Optiplex will run a few other VMs and containers as well, which means I get to spread the costs a little bit.

Would Veeam or Afi SaaS be cheaper in the long run? Yes. $420 per three years SaaS, vs around €900 ($1060) per three years in on-prem hard- and software.
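The arithmetic behind that comparison can be sketched roughly as follows. This is only an illustration: the user count of 10 is an assumption (it is what brings Veeam's $14.10 per user per year close to the $420 mentioned above), the function names are made up, and prices are kept in whole cents to avoid floating-point rounding.

```python
# Rough three-year cost comparison, using the prices quoted in this post.
# ASSUMPTION: 10 users; the post does not state the actual user count.

YEARS = 3
USERS = 10  # hypothetical


def saas_cost_cents(price_per_user_year_cents: int, users: int = USERS, years: int = YEARS) -> int:
    """Total SaaS cost in cents for a per-user-per-year price."""
    return price_per_user_year_cents * users * years


def onprem_cost_cents(license_per_year_cents: int, hardware_cents: int, years: int = YEARS) -> int:
    """Total on-prem cost in cents: yearly OS license plus one-off hardware."""
    return license_per_year_cents * years + hardware_cents


veeam_usd_cents = saas_cost_cents(1410)              # $14.10 per user per year
onprem_eur_cents = onprem_cost_cents(23300, 59000)   # EUR 233 per year + EUR 590 hardware

print(f"Veeam SaaS: ${veeam_usd_cents / 100:.2f}")
print(f"On-prem:    EUR {onprem_eur_cents / 100:.2f} (hardware counted in full)")
```

With the hardware counted in full the on-prem total comes out higher than the "around €900" above; presumably that figure already reflects the Optiplex being shared with other VMs and containers.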

So why do it?

For the learning experience and for my paranoia. :)

kilala.nl tags: work, sysadmin,


2025-04-23 18:00:00

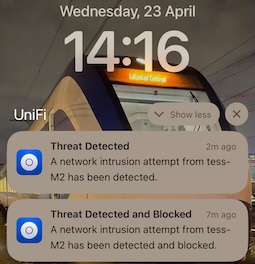

I was doing a few Burp Suite labs on Hack The Box earlier today. I noticed that one particular test with Intruder kept getting stuck after the second attempt. Only after restarting the lab VM on a new IP did my test start again, only to get blocked again.

It was only later, when I looked at my phone, that I put two and two together.

The lab VMs are not behind Hack The Box's VPN; they're public on the Internet. Thus my tests weren't going through the lab VPN, but straight through my router.

The router with an IDS+IPS.

Unifi was blocking my "hacking". :D

kilala.nl tags: sysadmin,


2025-01-01 00:00:00

Kilala.nl is a personal, private website. It is not operated by a corporation or a company.

The codebase is a private project of Tess Sluijter-Stek. The website runs on PaaS infrastructure owned by Dreamhost.com.

Neither Dreamhost nor Tess Sluijter-Stek authorize any third party to perform security assessments on this website, nor on any of its constituent components.

Security issues, abuse and the like may be reported by email, per RFC 9116 (security.txt). But acknowledgements or compensation will not be provided.

kilala.nl tags: sysadmin,


2024-11-28 17:34:00

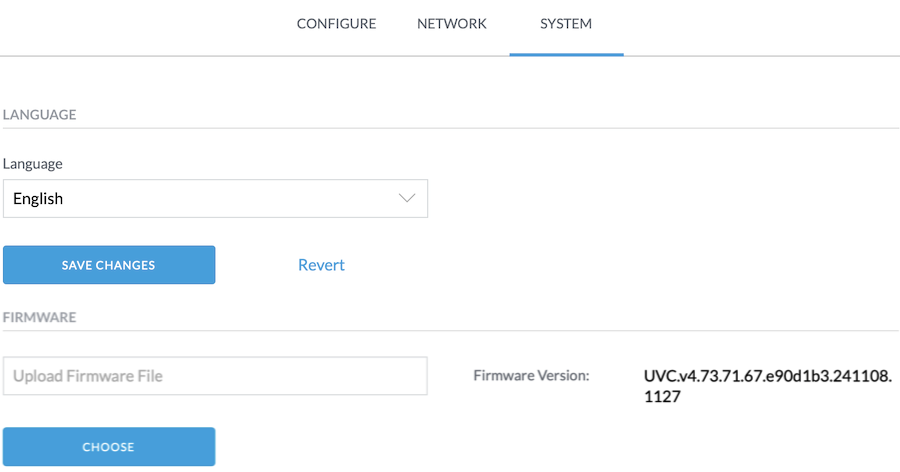

Earlier this month I bought an additional Unifi Flex G3 camera, for our security setup.

Adoption of the camera into the network went perfectly fine and it started streaming across the site-to-site VPN immediately. The image quality was bad though, and judging by the sticker on the box, the camera had been packaged two years ago. It's an old model after all.

The Unifi Protect app quickly identified that the camera had very, very outdated firmware. I don't even remember what version it was, something like 4.17.x. And it offered to apply the latest update! Lovely!

Except that it didn't.

Not even after I brought the camera back on-site to where the Protect appliance is installed. The updates weren't happening. There were no error messages... Just, no updates applied.

I have mixed feelings about Ubiquiti. On the one hand they have great documentation and a decent forum. On the other hand, there's so much activity on those forums that finding answers becomes pretty hard.

Well, after digging and digging, I found a suggestion to just download the latest available update manually from the UVC-G3-Flex product page. You can then open a browser and browse to the web interface of your camera. Yes, the camera has its own web UI!

For example, go to https://10.0.30.210/camera/system

You will need to log in! The username is "ubnt" and the password can be found in the Unifi Protect app. But not in the mobile app on your phone! You need the webapp! Go to https://unifi.ui.com/consoles then visit your Protect console and go into Protect > Settings > General. There you will find Recovery Code. That field has the password you need!

Wow, that's hidden away!

Once you log in to the camera UI, you can go into the System tab, where you can upload a new firmware version. This finally took me from 4.17 to 4.30, which is the latest version available on the website.

From there on out, the theory is that Protect will do the next update automatically.

But it didn't. I still had the same symptoms! Updates were not being applied and no error messages appeared!

So I hopped back into the camera web interface, to download the support logs. That gave me hundreds of lines of application and Linux logging. :) Among those lines I found some key error messages!

{"anonymous_controller_id":"REDACTED","controller_version":"5.1.57","anonymous_device_id":"REDACTED","version":"4.30.0","model":"UVC-G3-Flex","board_rev":12,"is_default":false}

1732818347 P6 360,598 ctl[669]: ubnt_ctlserver[669]: trace.put_trace(): https://REDACTED:7444/internal/device/traces

1732818347 P6 360,882 ctl[669]: ubnt_ctlserver[669]: trace.put_trace(): http_code = 202

1732818947 P4 960,847 ctl[669]: Firmware validation failed, uri=https://REDACTED:7444/internal/update?platform=s2l&product=uvc&updateType=firmware&version=4.73.71, status=/tmp/bin/precheck-mergeall: .: line 3: can't open '/tmp/hooks/ubnt_utils.sh': No such file or directory

This suggests a few things:

This suggests that firmware version 4.30.0 for the UVC-G3-Flex is too outdated to actually upgrade to 4.73! That's problematic!

The logs also gave me an idea!

What if I just download that update file using curl or wget, from the console onto my laptop? And what if I then go back into the camera's onboard web interface and just upload that file?

Well, that worked! :D

The G3 Flex is now happily running firmware 4.73.
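Finding the download URL in hundreds of log lines can be scripted. The following is a minimal sketch, assuming the support log contains a "Firmware validation failed, uri=..." line shaped like the one quoted above; the function name and the regular expression are made up for illustration and are not part of any Ubiquiti tooling.

```python
import re
from typing import Optional

# Matches the "uri=..." field in the camera's "Firmware validation failed" log line.
URI_PATTERN = re.compile(r"Firmware validation failed, uri=(?P<uri>[^,\s]+)")


def find_firmware_uri(log_text: str) -> Optional[str]:
    """Return the first firmware update URI found in the support log, or None."""
    match = URI_PATTERN.search(log_text)
    return match.group("uri") if match else None


# Sample taken from the log excerpt in this post (host name redacted).
sample = (
    "1732818947 P4 960,847 ctl[669]: Firmware validation failed, "
    "uri=https://REDACTED:7444/internal/update?platform=s2l&product=uvc"
    "&updateType=firmware&version=4.73.71, status=/tmp/bin/precheck-mergeall"
)
print(find_firmware_uri(sample))
```

The URL it prints is the one to fetch with curl or wget before uploading the file through the camera's web interface.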

kilala.nl tags: sysadmin,


2024-11-26 15:02:00

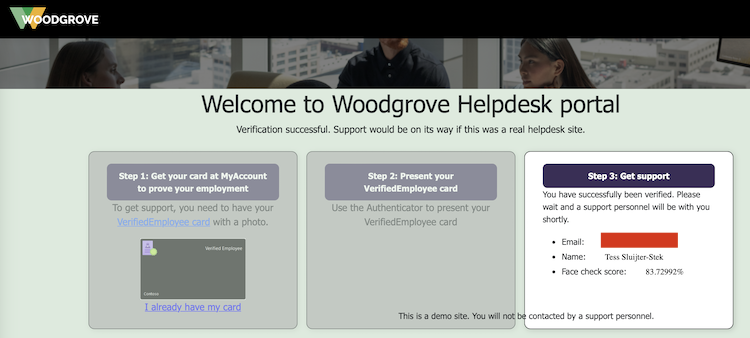

Ever since LinkedIn introduced their Verifications, they've been constantly pushing all their members to get verified because (of course!) other members will be more likely to trust you. Since the verification process generally involves using Persona to read your passport, a lot of people are flat out refusing to do so. Be it for privacy, be it for deadnaming or for other reasons, there's plenty of discussion about red flags.

Reading through LinkedIn's verification options, I noticed there's an alternative: employment verification, where your employer will confirm that you are indeed in their service. Interesting!

Since I am self-employed and I own an actual company, does that mean I can verify myself? Why yes, yes it does.

I did some reading and pieced together some documentation:

The process I followed is as such:

*: If you do not have a photograph of yourself set up under My Account, Face Check will fail. It will give you an error message like "No face detected in Verified ID. Use a different Verified ID with a better photo and try again."

After setting myself up with a Verified ID, I used Microsoft's Woodgrove public test app. Here, I clicked the option that I have a Verified ID, which gave me a QR code to scan. I did so with the Azure MFA app, which asked whether I indeed wanted to share my identity.

The Azure MFA app then started the front-facing camera, took a whole bunch of photographs and used Microsoft's AI to compare them to the photograph that's set up in my Verified ID. This is why I earlier ran into the "No face detected" error message: my account avatar was the Unixerius logo instead of my actual face.

And it works!

Next up: I have submitted a request to LinkedIn / Microsoft, as per the instructions detailed here. I hope that they will in fact enable Workplace Verification for Unixerius.

This has been an educational day!

kilala.nl tags: work, sysadmin,


2023-04-21 18:37:00

It's no secret that I use Ubiquiti equipment for my networking. My office runs on a UDM Pro, which has been great for me.

The UDM Pro performs well and is stable, it has a great feature set and it's easy to manage (for someone who wants to spend little time managing their network). Heck, even site-to-site VPN for my security cameras was simple!

My main WAN connection comes from MAC3Park, my housing company. They recently had an outage on my Internet connection, which lasted a few days. That messes with my backups and a few of my business processes, so I want to have at least some form of alternative in place.

Luckily, the UDM Pro also makes it dead simple to configure automatic failover or even load balancing across two WAN connections! It really is amazingly simple! Or it should be, as we'll see in a bit.

As a second Internet connection, I looked into getting 4G/5G from my mobile provider. Ubiquiti have their own LTE/4G/5G solution, which looks awesome but is a bit expensive. For half the price, I got a Teltonika RUT241 aimed at IoT solutions.

Sure, the LAN port on the RUT241 is slower (10/100Mbit), but seeing how the 4G connection averages around 20MBit that'll be fine. That's also where the "should be simple" I mentioned earlier comes in.

The RUT241 worked great with my laptop, but when hooked up to the SFP RJ45 module on the UDM Pro it just wouldn't go. No amount of changing settings would make it work. Very odd! There was no DHCP lease and even a statically assigned IP wouldn't let me connect to the Teltonika.

Turns out that, upon closer inspection, my vendor sent me the wrong SFP module :) I'd ordered the 1G model (which does 10/100/1000), but they sent me the 2.5G (which does 1000/2500/10000). The latter will not work with the Teltonika.

Time to get that SFP replaced by my vendor and we'll be good to go!

EDIT:

Or even better! I could just switch my cabled connection from MAC3Park (which is 1G) to port 10 and switch the Teltonika to port 9 (which natively does 100/1000). So basically, switch the definitions of WAN1 and WAN2 around!

EDIT2:

That worked.

I made port 9 WAN2 and port 10 WAN1. I switched the cables around and now port 9 happily runs at 100Mbit, connected to the Teltonika.

Even nicer: in bridge mode, port 9 gets the 4G IP address so it's perfectly accessible as intended. But in that same bridge mode, the RUT241 remains accessible on its static, private IP as well so you can still access the admin web interface.

So if, for example, my internal LANs are 10.0.10.0/24 and the Teltonika's private IP is 10.0.200.1, I've set up a traffic management route which says that 10.0.200.0/24 is accessible via WAN2. That way I can manage the Teltonika web interface, from inside my office LAN, even when it's in bridge mode. Excellent!
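The effect of that route can be sketched in a few lines. This is a simplified illustration using the example addresses above, not how the UDM Pro implements routing; the function name is hypothetical and "everything else goes via WAN1" is an assumption that ignores failover.

```python
import ipaddress

# Values taken from the example above; the route table itself is a simplification.
WAN2_ROUTES = [ipaddress.ip_network("10.0.200.0/24")]


def pick_wan(destination: str) -> str:
    """Return "WAN2" for destinations covered by the static route, else "WAN1"."""
    address = ipaddress.ip_address(destination)
    if any(address in network for network in WAN2_ROUTES):
        return "WAN2"
    return "WAN1"


print(pick_wan("10.0.200.1"))  # the Teltonika's private IP
print(pick_wan("192.0.2.10"))  # any other destination
```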

EDIT3:

I tested the setup!

Setting the UDM Pro to failover between the connections works very well. Within 60 seconds, Internet-connectivity was restored. It does seem that the dynamic DNS setup does not quickly switch over, so a site-to-site VPN will fail for a lot longer.

Setting the UDM Pro to load balancing didn't work so well. The connection remained down after I pulled WAN1.

kilala.nl tags: work, sysadmin,


2022-06-15 06:35:55

Recently I've been thinking back about old computing gear I used to own, or worked on in college. Nostalgia has a tendency to tint things rose, but that's okay. I get pangs of regret for getting rid of all my "antiques" (like the Televideo vt100 terminal, the 8088 IBM clone, my first own computer the Presario CDS524) but to paraphrase the meme: "Ain't nobody got room fo' all that!"

Still, it was really cool to run RedHat 5 on the Compaq and having the Televideo hang off COM1 to act as extra screen and keyboard.

Anyway... that blog post I linked to, regarding RH5, also mentions OS-9. OS-9 was (is, thanks to NitrOS9) an OS ahead of its time, with true multi-user and multi-processing support and with realtime processing, all on hardware that was relatively affordable at the time. It had MacOS and Windows beat by at least a decade and Linux was but a glint in the eyes of the future.

I've been doing some learning! In that linked blog post I referred to a non-descript orange "server". Turns out, that's the wrong word to use!

In reality that was a VMEbus "crate" (probably 6U) with space for about 8-10 boards. Yes it used Arcnet to communicate with our workstations, but those also turn out to be VMEbus "crates", but more like development boxen with room for 1-2 boards in a desktop box.

Looking at pictures on the web, it's very likely that the lab ran OS-9 on MVME147 boards that were in each of the crates.

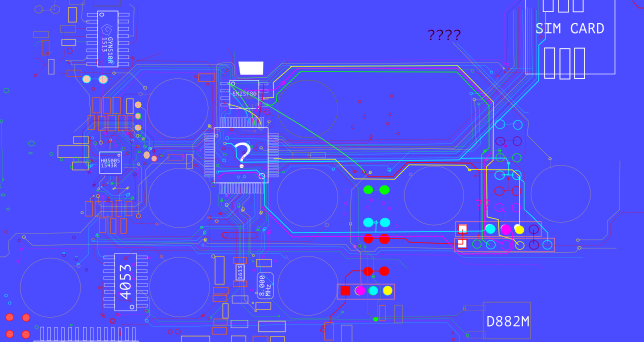

Color me surprised to learn that VMEbus and its successors are still very much in active use, in places like CERN but also in the military! And also in big medical gear, as this teardown of an Agfa X-Ray machine shows.

Cool stuff! Now I wanna play with an MC68k box again. :)

kilala.nl tags: work, studies, sysadmin,


2022-01-23 09:25:00

I've been using Vagrant for a lot of my quick tests and my classes for a while now. A few weeks ago, my old Vagrantfile configurations stopped working, with Vagrant and Virtualbox throwing errors like these:

There was an error while executing `VBoxManage`, a CLI used by Vagrant for controlling VirtualBox. The command and stderr is shown below.

Command: ["hostonlyif", "ipconfig", "vboxnet0", "--ip", "192.168.33.1", "--netmask", "255.255.255.0"]

Stderr: VBoxManage: error: Code E_ACCESSDENIED (0x80070005) - Access denied (extended info not available)

VBoxManage: error: Context: "EnableStaticIPConfig(Bstr(pszIp).raw(), Bstr(pszNetmask).raw())" at line 242 of file VBoxManageHostonly.cpp

Or, in a more recent version of Virtualbox:

The IP address configured for the host-only network is not within the allowed ranges. Please update the address used to be within the allowed ranges and run the command again.

Address: 192.168.200.11

Ranges: 192.168.56.0/21

Valid ranges can be modified in the /etc/vbox/networks.conf file.

A search with Google shows that a few versions ago VirtualBox introduced a new security feature: you're now only allowed to whip up host-only networks in specific preconfigured ranges. Source 1. Source 2. Source 3.
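To see why the address from the error message gets rejected, the range check can be reproduced with Python's standard `ipaddress` module. This is only a sketch of the comparison, using the range reported in the error above; it does not read /etc/vbox/networks.conf and the function name is made up.

```python
import ipaddress

# The range reported in the error message above.
ALLOWED_RANGES = [ipaddress.ip_network("192.168.56.0/21")]


def is_allowed(address: str) -> bool:
    """True if the address falls inside one of the allowed host-only ranges."""
    ip = ipaddress.ip_address(address)
    return any(ip in network for network in ALLOWED_RANGES)


for candidate in ("192.168.200.11", "192.168.56.11"):
    print(candidate, is_allowed(candidate))
```

A /21 starting at 192.168.56.0 covers 192.168.56.0 through 192.168.63.255, so an address in 192.168.200.x falls outside it.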

The work-arounds are do-able.

While the former is more correct, I like the latter since it's a quicker fix for the end-user.

BEFORE:

stat1.vm.network "private_network", ip: "192.168.200.33"

AFTER:

stat1.vm.network "private_network", ip: "192.168.200.33", virtualbox__intnet: "08net"

Apparently it's enough to give Virtualbox a new, custom internal network name.

kilala.nl tags: work, sysadmin, studies,


2021-02-17 20:30:00

When using a "shell" executor with gitlab-runner you may run into the following errors, when trying to upload artifacts to Gitlab.

ERROR: Uploading artifacts as "archive" to coordinator... error error=couldn't execute POST against https://gitlab.corp.broehaha.nl/api/v4/jobs/847/artifacts?artifact_format=zip&artifact_type=archive: Post https://gitlab.corp.broehaha.nl/api/v4/jobs/847/artifacts?artifact_format=zip&artifact_type=archive: proxyconnect tcp: tls: first record does not look like a TLS handshake

The issue here is that your "gitlab-runner" user account has picked up an HTTP proxy configuration that's not sitting well with it.

In my homelab, the proxy settings are configured for all users using Ansible, through "/etc/profile". For the "gitlab-runner" user that apparently may be problematic when trying to talk to the internal Gitlab server. Quick and dirty work-around: unset the proxy settings from your environment.

echo "unset http_proxy; unset https_proxy" >> ~/.bashrc

echo "unset http_proxy; unset https_proxy" >> ~/.profile
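The same idea also works for a single script that launches a child process: drop the proxy variables from a copy of the environment instead of changing the user's profile. A minimal sketch, assuming only the two variables named above matter; the helper name is made up, and also stripping the upper-case variants is an addition that goes beyond the work-around above.

```python
import os

PROXY_VARS = ("http_proxy", "https_proxy")


def without_proxy(env: dict) -> dict:
    """Return a copy of env with the proxy variables removed (either letter case)."""
    blocked = {name.lower() for name in PROXY_VARS}
    return {key: value for key, value in env.items() if key.lower() not in blocked}


clean_env = without_proxy(dict(os.environ))
# Show which proxy-related variables (if any) are left, e.g. no_proxy.
print(sorted(key for key in clean_env if "proxy" in key.lower()))
```

The resulting dictionary can be passed to a child process, for example with `subprocess.run(..., env=clean_env)`.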

kilala.nl tags: work, sysadmin,


2021-02-07 22:08:00

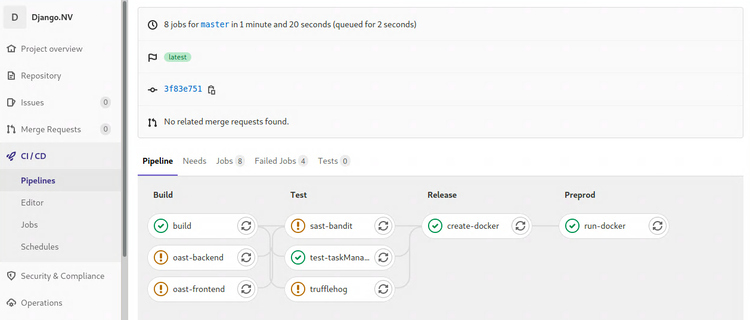

I'll talk about it in more detail at a later point in time, but I'm about a week's worth into the Certified DevSecOps Professional training by Practical DevSecOps. So far my impressions are moderately positive, more about that later.

In the labs we'll go through a whole bunch of exercises, applying a multitude of security tests to a Gitlab repository with a vulnerable application. Most of the labs involve nVisium's sample webapp django.nV.

Having reached the half-way point after that one week, I had not encountered two crucial parts of the DevOps / CICD pipeline which I'm not at all familiar with. We're applying all kinds of tests, but we never did the steps you'd expect before or after: creating the artifacts, deploying and running them. As I've said before, I'm #NotACoder.

Instead of focusing on one of the next chapters, today I spent all day improving my Gitlab and Docker install by applying all the required trusts and TLS certificates. This, in the end, enabled me to create, push, pull and run a Docker image with the django.nV web app.

If anyone's interested: here's my Dockerfile and gitlab-ci.yml that I'd used in my homelab. You cannot just throw them into your own env, without at least changing username, passwords and URLs. You'll of course also need a Docker host with a gitlab-runner for deployment.

Note: The Docker deploy and execute steps show a bad practice: hard-coded credentials in a pipeline configuration. Ideally this challenge should be solved with variables or even better: integration with a vault like Azure Key Vault, PasswordState or CyberArk PasswordVault. For now, since this is my homelab, I'll leave them in there as a test for Trufflehog and the other scanners ;)
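As an illustration of the "variables" option: a deploy script can read its credentials from the environment, which is where CI/CD variables end up at run time. This is a sketch, not what my pipeline does; REGISTRY_USER and REGISTRY_PASSWORD are hypothetical variable names and the demo values are fake.

```python
import os


def registry_credentials(env=os.environ):
    """Read registry credentials from the environment instead of hard-coding them.

    REGISTRY_USER and REGISTRY_PASSWORD are hypothetical names; a CI/CD variable
    with any other name works the same way.
    """
    try:
        return env["REGISTRY_USER"], env["REGISTRY_PASSWORD"]
    except KeyError as missing:
        raise RuntimeError(f"Missing CI/CD variable: {missing.args[0]}") from None


# Demo with a made-up environment, so the sketch runs anywhere.
demo_env = {"REGISTRY_USER": "ci-bot", "REGISTRY_PASSWORD": "not-a-real-secret"}
user, _password = registry_credentials(demo_env)
print(f"Logging in to the registry as {user}")
```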

kilala.nl tags: work, sysadmin, studies,


2021-02-07 19:45:00

My homelab runs its own PKI and most servers and services are provided with correct and trusted certificates. It's a matter of discipline and of testing as close to production as possible.

Getting Gitlab on board is a fairly okay process, but takes a bit to figure out.

So my quick and dirty way of getting things set up:

Now, you also want Gitlab and your runners to trust your internal PKI! So you will need to ask your PKI admin (myself in this case) for the CA certificate chain. You will also need the individual certificates for the root and intermediary PKI servers.

Don't forget to restart Gitlab itself, the runners and Docker after making these config changes!

You can then perform the following tests, to make sure everything's up and running with the right certs.

kilala.nl tags: work, sysadmin, studies,


2021-02-06 21:59:00

During the CDP class, one of the tools that gets discussed is Trufflehog. TLDR: yet another secrets scanner, this one built in Python.

I ran into an odd situation running Trufflehog on my internal Gitlab CICD pipelines: despite running it against the intentionally vulnerable project django.nV, it would come back with exit code 0 and no output at all.

Why is this odd? Because it would report a large list of findings:

But whenever I let Gitlab do it all automated, it would always come up blank. So strange! All the troubleshooting I did confirmed that it should have worked: the files were all there, the location was recognized as a Git repository, Trufflehog itself runs perfectly. But it just wouldn't go...

I still don't know why it's not working, but I did find a filthy workaround:

    trufflehog:
      stage: build
      allow_failure: true
      image: python:latest
      before_script:
        - pip3 install trufflehog
        - git branch trufflehog
      script:
        - trufflehog --branch trufflehog --json . | tee trufflehog-output.json
      artifacts:
        paths: [ "trufflehog-output.json" ]
        when: always

If I first make a new branch and then hard-force Trufflehog to look at that branch locally, it will work as expected.
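Since the symptom here was an empty result with exit code 0, it can help to check the artifact explicitly. A rough sketch, assuming that `trufflehog --json` writes one JSON object per line and that each object has a "reason" field; both are assumptions about the output format, and the sample data below is made up.

```python
import json
from collections import Counter


def count_findings(json_lines: str) -> Counter:
    """Count findings per "reason" in newline-delimited JSON output."""
    counts = Counter()
    for line in json_lines.splitlines():
        line = line.strip()
        if not line:
            continue
        finding = json.loads(line)
        counts[finding.get("reason", "unknown")] += 1
    return counts


# Made-up sample data, only to show the shape of the input.
sample = (
    '{"reason": "High Entropy", "path": "settings.py"}\n'
    '{"reason": "High Entropy", "path": "db.py"}\n'
)
print(count_findings(sample))
print(sum(count_findings("").values()))
```

A total of zero on a project that is known to be vulnerable is a hint that the scanner looked at the wrong thing, not that the project is clean.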

kilala.nl tags: work, sysadmin, studies,


2020-12-17 08:39:00

I've been studying on and off for the EX407 Ansible exam for ... lemme check... 1.8 years now. Started in March of 2019, hoping to renew my RHCE in time, but then I kept on getting distracted. Two certs and three other studies further, I still need to pass EX407 to renew my RHCE. Way to go on that discipline! ( ; ^_^)

Anywho, there's a few resources that proved to be helpful along the way; thought I'd share them here.

kilala.nl tags: work, sysadmin, studies,


2020-12-05 22:59:00

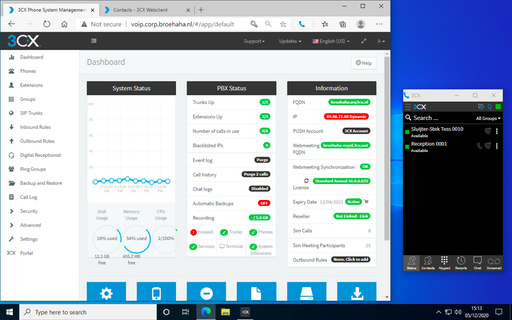

In order to simulate a "work-from-home" (WFH) situation in the lab, I'm very happy to test the 3CX web client. Their webapp supports a lot of the productivity features you'd expect and works with a browser extension (Edge and Chrome) to make actual calls. No need to install a soft-phone application, just grab the browser extension!

The RDP protocol supports the redirection of various types of hardware, including audio input and output. You do need to enable this for your target host (or in general). For example, in Royal TSX you would edit the RDP connection, go to Properties > Redirection and put a check in the box for Record audio from this computer. Also select Bring audio to this computer.

With a Windows target host it'll now work without a hassle.

Linux is a different story, but that's because the xRDP daemon needs a little massaging. Specifically, you will need to build a module or two extra for PulseAudio. This isn't something you can easily "apt install", but the steps are simple enough. Full documentation over here.

After building and installing the modules, you'll need to log out and log back in. After that playing audio works and PulseAudio will have detected your system's microphone as well.

kilala.nl tags: homelab, sysadmin,


2020-12-05 16:15:00

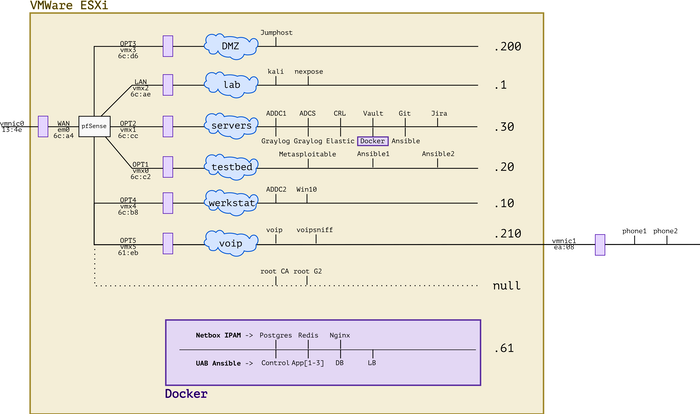

It's been a busy year! Between adding new hardware, working with Ansible and messing with forensics and VOIP, the lab has evolved. I'm very lucky to have all of this at my disposal and I'm grateful to everybody who's helped me get where I am today. :)

kilala.nl tags: work, sysadmin, homelab,


2020-12-05 15:25:00

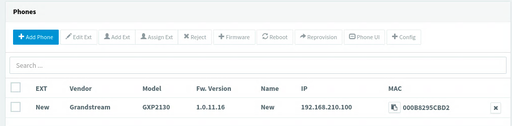

Just yesterday, a lucrative dumpster dive netted me two brand-new IP desk phones, very spiffy Grandstream GXP2130 models. Because studying for my upcoming Ansible exam isn't much fun (OMG two weeks!!), procrastination struck!

Let's add VOIP to my simulated company Broehaha in my homelab!

Until this weekend I had zero experience with VOIP, SIP and the like beyond using Cisco phones as an end-user. I'd heard plenty of colleagues talk about Asterisk and I remember hacking an Asterisk server in the PWK labs at Offensive Security, but that's about as far as my exposure went.

Wanting to save time and to simulate an actual company, I quickly gave up on both Asterisk and FreeSwitch. As the meme goes: "Ain't nobody got time fo' that!"

A little search further led me to 3CX, a commercial PBX solution that provides a free edition for (very limited) small environments. They offer a Debian-based soft-appliance that you can deploy from ISO anywhere you like.

So:

Last night I spent from 2200-0100 mucking around with 3CX because no matter what I tried, the GXP2130 would not show up on the admin UI. The phone's in the network just fine and could also talk to 3CX, but there were a few steps missing.

Continuing this morning, I used tcpdump and other tools to ascertain that:

After lunch, things fell into place :)

So... I upgraded the phone's firmware in four steps, using an on-prem update server. Then, after resetting the phone to factory defaults, it showed up just fine and I could add it to one of my extensions!

The cool part is that 3CX comes with a web UI for end-users, that also works with their browser extension for Chrome or Edge. Now I can simulate a working-from-home situation, with one user on a Windows 10 VM calling the "reception" on the Grandstream phone. Or vice versa.

kilala.nl tags: work, sysadmin, homelab,


2020-12-05 13:43:00

With many thanks to my friends at ITVitae and some dumpster diving I snagged two brand-new Grandstream GXP2130 IP phones, to practice VOIP in my homelab. They're pretty sexy phones! Nice build quality and a very decent admin interface: a great first step into the world of VOIP / SIP.

Out of the box, these two phones came with the dated 1.0.7.25 firmware. No matter what I tried, they refused to upgrade to the current version 1.0.11.16. Pointing them at the Grandstream firmware site? Nothing. Pointing them at a local web server with the 1.0.11.16 firmware? Nothing.

After a bit of searching, I found a helpful thread on the GS support forums that suggests that the firmware version gap is simply too great. We need to apply a few of the in-between versions, one by one.

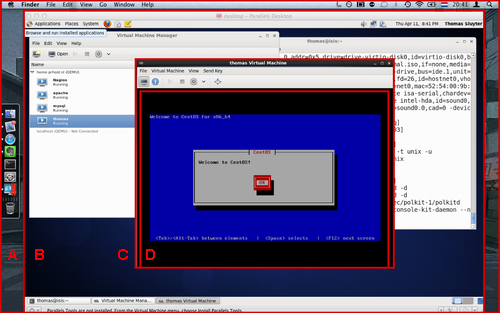

As a work-around I built my own firmware upgrade server, in the VOIP network segment of my homelab. A simple CentOS 7 box with Apache. I then did the following:

cd /tmp

wget http://www.grandstream.com/sites/default/files/Resources/RingTone.zip

wget http://firmware.grandstream.com/Release_GXP2130_1.0.7.97.zip

wget http://firmware.grandstream.com/Release_GXP2130_1.0.8.56.zip

wget http://firmware.grandstream.com/Release_GXP2130_1.0.9.135.zip

wget http://firmware.grandstream.com/Release_GXP2130_1.0.11.3.zip

wget http://firmware.grandstream.com/Release_GXP2130_1.0.11.16.zip

unzip RingTone.zip

for FILE in Release*zip; do unzip "$FILE"; done

cd /var/www/html

sudo mkdir 7 8 9 11

sudo cp /tmp/ring* 7/; sudo cp /tmp/Rel*.7.*/*bin 7/

sudo cp /tmp/ring* 8/; sudo cp /tmp/Rel*.8.*/*bin 8/

sudo cp /tmp/ring* 9/; sudo cp /tmp/Rel*.9.*/*bin 9/

sudo cp /tmp/ring* 11/; sudo cp /tmp/Rel*.11.*/*bin 11/

sudo chmod -R o+r *

From there on out, run a "sudo tail -f /var/log/httpd/access.log" to see if the phone is actually attempting to pick up the relevant update files.

Then, on the phone, log in as "admin" and browse to Maintenance > Upgrade and Provisioning. Set the access method to HTTP. As the Firmware Server Path set the IP address of the newly built upgrade server (e.g. 192.168.210.100), followed by the version path. We will change this path for every version upgrade.

For example:

First update to 1.0.7.97: set the path, click Save and Apply, then at the top click Provision. You should see the phone downloading the firmware update in "access.log". Once the phone has rebooted, check the web interface for the current version number.

Then "lather, rinse and repeat" for each consecutive version. After 7, upgrade to 8, then to 9, then to 11 (this works without issues). In the end you will have a Grandstream phone running 1.0.11.16, after starting at 1.0.7.25.
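The sequence of Firmware Server Path values can be listed with a small sketch. It uses the example server IP and the directory names created earlier; the exact "IP/directory" format of the path and the function name are assumptions made for illustration.

```python
SERVER = "192.168.210.100"     # example upgrade server from this post
STEPS = ["7", "8", "9", "11"]  # directories created under /var/www/html


def firmware_paths(server: str = SERVER, steps=STEPS):
    """Return the Firmware Server Path value for each consecutive upgrade step."""
    return [f"{server}/{step}" for step in steps]


for number, path in enumerate(firmware_paths(), start=1):
    print(f"Step {number}: set Firmware Server Path to {path}")
```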

Afterwards: don't forget to reset the phone to factory defaults, so it will correctly join your PBX for auto-provisioning.

kilala.nl tags: work, sysadmin, homelab,


2020-12-02 19:16:00

It's been a while since I've worked in my homelab, between my day-job and my teaching gig there's just been no time at all. But, with my EX407 around the corner it's time to hammer Ansible home!

Of course, it's tempting to get sidetracked! So when Tomas' Lisenet practice exam for EX407 suggests I need five VMs with RHEL, I go and find a way to build those post-haste. Now that I've been playing with Vagrant more often, that's become a lot easier!

First, there's a dependency: you will need to download and install a recent version of VMware's OVFTool. Make sure that its binary is in your $PATH.

After that, JosenK's Vagrant plugin for VMware ESXi makes life so, so easy! On my Linux workstation it was as easy as:

$ sudo apt install vagrant

$ vagrant plugin install vagrant-vmware-esxi

$ mkdir vagrant-first-try; cd vagrant-first-try

$ vagrant init

$ vi Vagrantfile

After which the whole Vagrantfile gets replaced as follows:

    nodes = {
      "vagrant1.corp.broehaha.nl" => ["bento/centos-8", 1, 512, 50 ],
      "vagrant2.corp.broehaha.nl" => ["bento/centos-8", 1, 512, 50 ],
      "vagrant3.corp.broehaha.nl" => ["bento/centos-8", 1, 512, 50 ],
      "vagrant4.corp.broehaha.nl" => ["bento/centos-7", 1, 512, 50 ],
      "vagrant5.corp.broehaha.nl" => ["bento/centos-7", 1, 512, 50 ],
    }

    Vagrant.configure(2) do |config|
      nodes.each do | (name, cfg) |
        box, numvcpus, memory, storage = cfg
        config.vm.define name do |machine|
          machine.vm.box = box
          machine.vm.hostname = name
          machine.vm.synced_folder('.', '/Vagrantfiles', type: 'rsync')
          machine.vm.provider :vmware_esxi do |esxi|
            esxi.esxi_hostname = '192.168.0.55'
            esxi.esxi_username = 'root'
            esxi.esxi_password = 'prompt:'
            esxi.esxi_virtual_network = "Testbed"
            esxi.guest_numvcpus = numvcpus
            esxi.guest_memsize = memory
            esxi.guest_autostart = 'true'
            esxi.esxi_disk_store = '300GB'
          end
        end
      end
    end

To explain a few things:

Any requirements? Yup!

kilala.nl tags: work, sysadmin, homelab,


2020-11-10 19:52:00

For a while now, I've been using Git + SSH on Windows 10 and I've been very content with the whole setup.

Git was installed using Chocolatey, just because it's easy and takes care of a few things for you. But it turns out it does a little bit "too much" in the background.

I wanted to move my SSH files (private key, known_hosts etc) to OneDrive, thus changing the path to the files. I just couldn't figure out where the SSH client configuration for the Git from Chocolatey was tucked away. This Git does not use the default OpenSSH client delivered by Windows 10 C:\windows\system32\OpenSSH\ssh.

An hour of searching made me realize that "git.install", the package from Choco, includes a mini-Unix-like environment. It's not Git on Windows: it runs on MINGW-W64.

I found the following files, which define the behavior of the Choco-installed Git + SSH:

In the latter file, you can set IdentityFile and UserKnownHostsFile to change the file paths of the private key and the known_hosts file, respectively.
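As a minimal sketch of what such a configuration stanza could look like, the helper below renders the two directives for a given directory. The directory, the key name `id_rsa` and the function name are made up for illustration. Whether the bundled ssh prefers `C:/...` or `/c/...` style paths is an assumption to verify on your own install.

```python
from pathlib import PureWindowsPath


def render_ssh_config(ssh_dir: str, key_name: str = "id_rsa") -> str:
    """Return an ssh_config stanza pointing SSH at the files in ssh_dir."""
    base = PureWindowsPath(ssh_dir)
    # Paths are written with forward slashes (assumption: the bundled ssh accepts them).
    identity = (base / key_name).as_posix()
    known_hosts = (base / "known_hosts").as_posix()
    return "\n".join([
        "Host *",
        f'    IdentityFile "{identity}"',
        f'    UserKnownHostsFile "{known_hosts}"',
    ])


# Hypothetical OneDrive location, purely as an example.
stanza = render_ssh_config(r"C:\Users\example\OneDrive\ssh")
print(stanza)
```

The output can be pasted into the SSH client configuration file that the Choco-installed Git actually reads.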

kilala.nl tags: work, sysadmin,

2020-11-09 20:53:00

A while back I wrote detailed instructions on how we managed to get VBox to run on Windows 10 with Hyper-V remaining enabled. This required a little tweaking, but it allowed us to retain all of the Win10 security features offered by Hyper-V.

Recently the VirtualBox team released version 6.1.16 which includes a number of improvements aimed at Windows 10 and "Windows Hypervisor Platform".

You now no longer need any of the tweaks I described earlier! Vanilla VirtualBox 6.1.16 runs on top of Hyper-V and WHP without further issues. SHA2 hashing works well and GCrypt no longer needs to have its acceleration disabled! This makes life so much easier!

kilala.nl tags: work, sysadmin,

2020-10-06 19:30:00

EDIT: The tweaks outlined in this blog post are no longer needed. Read this update!

Sometimes you just have an odd need or craving! You just have to have some spicy curry udon after midnight! You just have to get an old RAID controller to work in your homelab! Or in this case: you just really have to get VirtualBox and Hyper-V to play nice on Windows 10.

That's something that just wouldn't fly until recently. But now it'll work!

I would like to extend my warmest thanks to my colleague Praveen K-P, who worked with me to figure all of this out. =)

These instructions are a work-in-progress and the solution is not 100% rock-solid.

Some mathematical functions, such as SHA2 or CRC, may fail depending on the OS you run in the VM. This means that outright installing an OS from DVD or ISO may fail during extraction: SHA1 or SHA2 checksums won't match up and the installer will refuse to continue. This is likely caused by the layered CPU virtualization and is under research with the VirtualBox team.

Also, please be careful when choosing base images for your VirtualBox VMs! Do not assume that you can trust every VM image on the Vagrant repositories! Only install images from trusted providers such as:

Installing untrusted base images may lead to malware infections or worse.

Kali Linux is one of the distributions whose installation fails due to the caveat involving mathematical functions. So let's use Vagrant instead, which pulls pre-built images from an online repository.

Open Powershell. Run the following commands:

cd $HOME

mkdir Vagrant; cd Vagrant;

vagrant init kalilinux/rolling

Before continuing, edit the "vagrantfile" file (e.g. with Notepad) and replace this line:

config.vm.box = "kalilinux/rolling"

With the following configuration. Edit the amount of RAM and CPUs to your liking. Me, I like 6GB and 3 cores.

config.vm.define "kali" do |kali|

kali.vm.box = "kalilinux/rolling"

kali.vm.hostname = "haxor"

kali.vm.provider "virtualbox" do |vb|

vb.gui = true

vb.memory = "6144"

vb.cpus = 3

vb.customize [ "modifyvm", :id, "--paravirtprovider", "minimal" ]

end

kali.vm.synced_folder '.', '/vagrant', disabled: true

kali.vm.provision "shell", inline: <<-SHELL

echo "Here we would install..."

mkdir -p /etc/gcrypt

[[ -f /etc/gcrypt/hwf.deny ]] || echo "all" >> /etc/gcrypt/hwf.deny

SHELL

end

Save the configuration file and now run the following in Powershell:

vagrant up kali

The init-command sets up your "Vagrant" directory and basic configuration file. By editing the "vagrantfile" we can change a lot of the behavior, including the way Kali perceives the VirtualBox hypervisor. We also tweak GCrypt, so it will refuse to try hardware accelerated cryptography. Both are required to make hashing and other maths work better.

The up-command actually starts the build of the VM, after which it is booted. The first installation will take a few minutes, after that you can just manage the VM using the VirtualBox user interface.

The Kali Linux Vagrant build includes the full graphical user interface! But you can also ssh -p 2222 vagrant@localhost to log in to the VM. Be sure to create your own account and to change all passwords!

Your Linux distribution may have problems performing SHA2 calculations correctly. According to this source, it’s “Because apt use sha256 method from libgcrypto20, but optimized too much. We can deny this opt. using configuration file /etc/gcrypt/hwf.deny.”

$ sudo bash

# mkdir /etc/gcrypt

# echo all >> /etc/gcrypt/hwf.deny

In addition, we learned that in our nested situation (VirtualBox on top of Hyper-V) it may be a good idea to change your VM's "paravirtualization interface" from "Normal" to "Minimal". #TIL that this is not about how VBox provides better performance, but about what paravirtualization information is passed to the guest OS. In my case this change did fix hashing problems. This change can be made manually by editing the VM settings in VirtualBox (VM → Settings → System → Acceleration → Paravirtualization interface), or in the Vagrant file:

vb.customize [ "modifyvm", :id, "--paravirtprovider", "minimal" ]

Vagrant.configure("2") do |config|

config.vm.define "kali" do |kali|

kali.vm.box = "kalilinux/rolling"

kali.vm.hostname = "haxor"

kali.vm.network "forwarded_port", guest: 22, host: 2222, host_ip: "127.0.0.1"

kali.vm.network "forwarded_port", guest: 3389, host: 2389, host_ip: "127.0.0.1"

kali.vm.provider "virtualbox" do |vb|

vb.gui = true

vb.memory = "6144"

vb.cpus = 3

vb.customize [ "modifyvm", :id, "--paravirtprovider", "minimal" ]

end

kali.vm.synced_folder '.', '/vagrant', disabled: true

kali.vm.provision "shell", inline: <<-SHELL

echo "Here we would install..."

mkdir -p /etc/gcrypt

[[ -f /etc/gcrypt/hwf.deny ]] || echo "all" >> /etc/gcrypt/hwf.deny

SHELL

end

config.vm.define "centos8" do |centos8|

centos8.vm.box = "centos/8"

centos8.vm.hostname = "centos8"

centos8.vm.box_check_update = true

centos8.vm.network "forwarded_port", guest: 22, host: 2200, host_ip: "127.0.0.1"

centos8.vm.provider "virtualbox" do |vb|

vb.gui = false

vb.memory = "1024"

vb.cpus = 1

vb.customize [ "modifyvm", :id, "--paravirtprovider", "minimal" ]

end

centos8.vm.provision "shell", inline: <<-SHELL

echo "Here we would install..."

mkdir -p /etc/gcrypt

[[ -f /etc/gcrypt/hwf.deny ]] || echo "all" >> /etc/gcrypt/hwf.deny

SHELL

centos8.vm.synced_folder '.', '/vagrant', disabled: true

end

end

kilala.nl tags: work, sysadmin,

2020-08-09 19:39:00

It's hilarious how stuck in one's ways one can get. I mean, I've always typed:

netstat -a | grep LISTEN | grep ^tcp

While prepping slides for my students, imagine my mirth when I learned "there's a flag for that". Man, it pays to read man-pages.

netstat -l4

ss -l4

#EternalNewbie 💖

kilala.nl tags: work, sysadmin,

2020-08-01 20:21:00

The Dell R410 in my homelab has served me very well so far! With a little upgrade of its memory it's run 20 VMs without any hassle. Finding this particular configuration when I did (at a refurbishing company) was a lucky strike: a decent price for a good pair of Xeons and two large disks.

I've been wanting to expand my homelab, to mess around with vMotion, Veeam and other cool stuff. Add in the fact that I'd love to offer "my" students a chance to work with "real" virtualization (using my smaller R410) and you've got me scouring various sources for a somewhat bigger piece of kit. After trying a Troostwijk auction and poking multiple refurbishers I struck gold on the Tweakers.net classified ads!

Pictured above is my new Dell R710, the slightly beefier sister of the R410. It has space for more RAM, for more disk drives and most importantly (for my own sanity): it's a 2U box with larger fans which produces a lot less noise than the R410. The seller even included the original X5550 CPUs separately.

So! From the get-go I decided to Frankenstein the two boxes, so I could actually put the R410 to use for my students while keeping a bit more performance in my homelab.

Moving that RAID1 set from the R410 to the R710 was an exciting exercise!

I really did not want to lose all of my VMs and homelab; I've put a year into the environment so far! Officially and ideally, I would set up VMware ESXi on the R710 and then migrate the VMs to the new host. There are many methods:

Couldn't I do it even faster? Well sure, but you can't simply move RAID sets between servers! Most importantly: you'll need similar or the same RAID controllers. In a very lucky break, both the R410 and the R710 have the Dell/LSI Perc 6i. So, on a wish and a prayer, I plugged in the RAID set and told the receiving Perc 6i to import the foreign configuration. And it worked!

After booting ESXi from the SD card, it did not show any of the actual data, which was a not-so-fun surprise. Turns out that one manual re-mount of the VMFS file system did the trick! All 24 VMs would boot!

So far she's a beaut! Now, onwards, to prep the R410 for my students.

kilala.nl tags: sysadmin, work,

2020-06-13 22:20:00

One Reddit user suggests that, while my suggested way of working is easier than others, it may also lead to "bricking" of servers: literally rendering them unusable, by applying firmware updates out of order.

Their suggestion is to instead use the SUU (Server Update Utility) ISO image for the server in question, which may be run either from a booted Windows OS, or through the LCM (Life Cycle Manager).

More information about the SUU can be found here at Dell.

Also, if you take a look at Dell's instruction video about using the SUU ISO from the LCM, I think we can all agree that this is in fact the easiest method bar none.

EDIT: That is, if it weren't for the fact that the old LCM firmware on the R410 cannot read the SUU files. So you have to run the SUU from Windows or CentOS.

If you want to skip all the blah-blah:

Early in 2019 I purchased a Dell R410, part of Dell's eleventh generation (11G) server line-up from 2010/2011. Since then I've had a lot of fun growing and maintaining my homelab, learning things like Ansible and staying in touch with Linux and Windows administration.

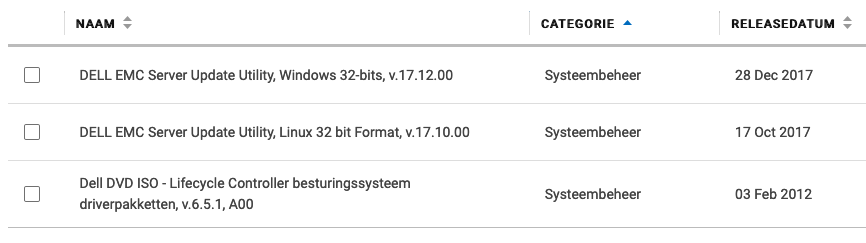

One task system administrators commonly perform, is the upgrading of firmware: the software that's built into hardware to make it work. If you check out the list of available firmware options for the R410, you'll see that quite a lot of that stuff goes into one simple server. Imagine what it's like to maintain all of that stuff for a whole rack, let alone a data center full of those things!

In the case of the R410, support options from Dell are slipping. While many homelabs (and some enterprises) still rock these now-aging servers, the vendor is slowly decreasing their active support.

In my homelab I have tackled only a small number of firmware updates and I'll quickly discuss the best/easiest way to tackle each. In some cases it took me days of trying to figure them out!

Dell's 11G systems (and later) include the Life Cycle Manager (LCM) which makes firmware updates a lot easier. You reboot your server into the USC (Unified System Configurator), launch the updater and pick the desired firmware updates.

Unfortunately, the bad news is that somewhere in 2018 Dell dropped the 11G updates from their "catalogs". You can still use the following steps to make your 11G system check for updates, but it won't find any. You can check the catalogs yourself at https://ftp.dell.com/catalog/. Mind you, based on this forum thread, the Dell ftp/downloads site hasn't been without issues over the years.

"No update is available. Make sure that the Windows(R) catalog and Dell(TM) Update Packages for Windows(R) are used."

There are no more updates for 11G systems available for LCM.

Technically it's possible to make your own internal clone of Dell's software update site. For a large enterprise, that's a great idea actually! Dell's recommended way of setting up a mirror to host updates for your specific systems, is to use the Repository Manager (DRM).

You could also use DRM to create a bootable USB stick that contains the updates you want, so the system can go and update itself, using LCM. Great stuff!

But you're still going to run into the same issue we discussed in the previous paragraph: 11G updates are no longer available through the catalogued repository. You can only get them from the Dell support site, as per below.

So for 11G, forget about DRM. For anything besides the iDRAC, you will need to boot an OS to update your firmware.

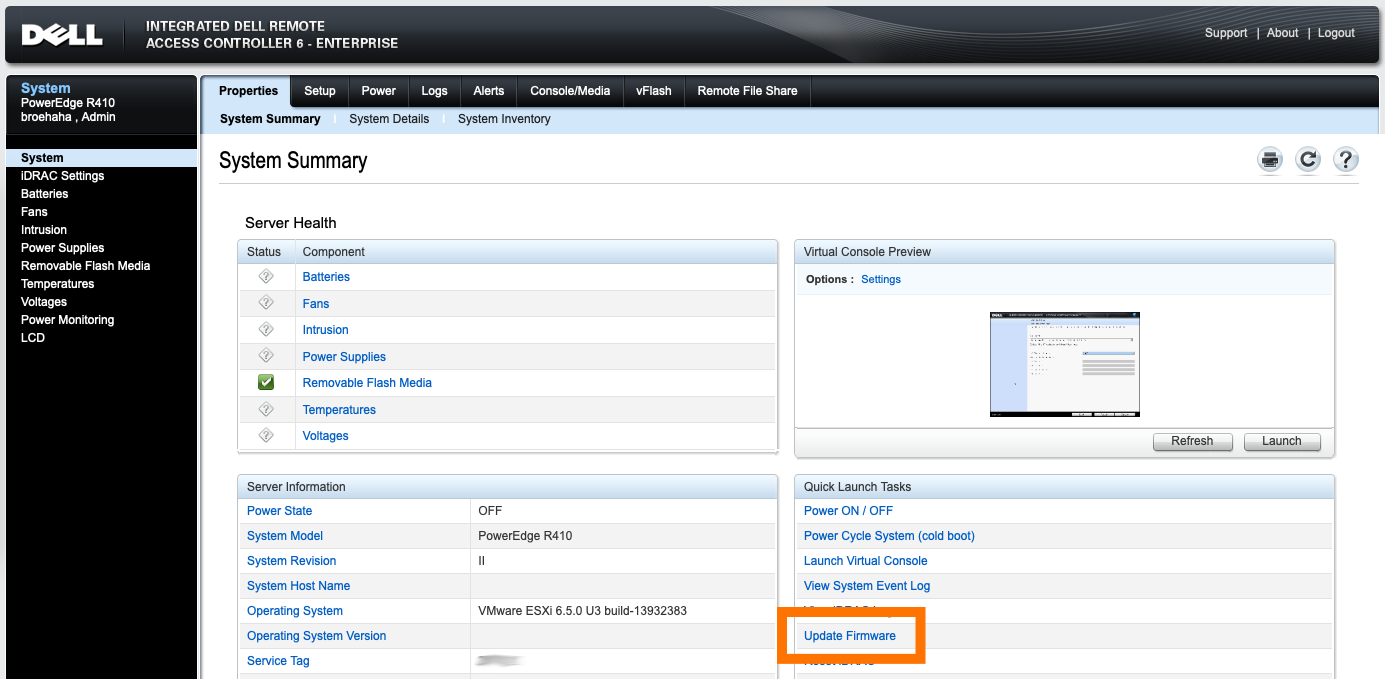

Updating the iDRAC integrated management system (if you have it) is the easiest task, assuming that you have the full Enterprise kit with the web GUI.

My R410 runs VMware ESXi which, while it's a Unix, is not a supported platform for running Dell's firmware updates. Dell supports a plethora of Windows versions, a few other OSes and (for the 11G systems) RHEL 5 or 6 (Red Hat Enterprise Linux).

I first wanted to try CentOS 6 (a RHEL 6 derivative), because that's an OS I'm quite comfortable with. I grabbed an ISO for CentOS 6 Live, used dd to chuck it onto a USB stick and booted the OS. Running the BIOS and LCM updates worked fine.

However, the BMC update proved to be quite a mess! In the .BIN package you'll find a rat's nest of shell scripts and binaries which have dependencies not available by default on the CentOS 6 live DVD (like procmail and a bunch of older C libraries). I tried fighting my way through all the errors, manually tweaking the code, but finally decided against it. There has to be an easier way!

Thanks to a forum thread at Dell, I learned that there is in fact an easier way. Instead of fighting with these odd Linux packages, let's go back to good ol' trusted DOS!

I learned that booting FreeDOS from a USB stick on the R410 is problematic. In my case: it's a no-go. So I took FreeDOS 1.3 and burned their Live CD to a literal CD-ROM. Stuck that in the R410's DVD drive and it boots like a charm!

While FreeDOS does not have USB drivers, there is some magic in the underlying boot loaders that will mount any USB drives attached to the system during boot-time. The USB stick I put in the back USB port was made available to me as C:, while the booted CD-ROM was R:.

What do you put on that USB stick? The contents of the PER410*.exe files available from Dell's support site. Each of these is yet another self-extracting ZIP file, containing all the needed tools for the update.

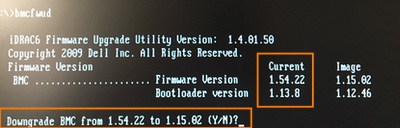

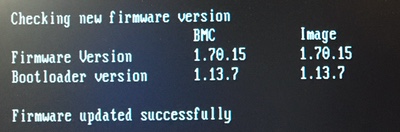

After removing the two iDRAC modules (read below) and getting the correct update (see below also), I followed the instructions from Dell's support team in that forum thread, extracted the ZIP file onto the USB stick, booted FreeDOS and ran "bmcfwud". The system needed a reboot and a second run of bmcfwud. And presto! My BMC was updated!

BMC stands for Baseboard Management Controller. It's Dell's integrated IPMI-based management system, which is literally integrated into the motherboard of the 11G systems. It'll let you do some basic remote management. The most important reason for homelab admins to consider updating BMC is to get version >=1.33 which greatly decreases fan noise.

BMC was superseded by iDRAC (integrated Dell Remote Access Controller), which offers cool features like SSH access, a web GUI and many, many more features! Here's a short discussion about it.

For all intents and purposes iDRAC replaces BMC. If you have an iDRAC installed, the BMC will not be active on your 11G system. The fan noise issues on the R410 should be fixed with any recent version of the iDRAC firmware.

So why did I want to update the BMC firmware?

Because I'm stubborn. =)

Initially, running the updater failed because it said my BMC was at version 2.92. Well, that's impossible!

Turns out, that's because I still had the iDRAC in there! :D I removed both iDRAC daughter cards and tried again.

A downgrade? While I grabbed the most recent BMC update from Dell's site?! No thank you!

So, funny story: Dell's support site for the R410 states that the most recent available version for BMC's firmware is 1.15. The poweredgec.com site for 11G also confirms this. But if you manually search for them, you'll find newer versions:

Apparently my BMC already had 1.54, so it already had the fan updates from 1.33. Guess all the noise that thing was making was "normal". Anyway, grabbing the 1.70 update and running bmcfwud finally had the desired end result.

kilala.nl tags: sysadmin, work,

2019-04-05 06:17:00

This morning an interesting question passed through the SANS Advisory Board mailing list:

"Looking for anyone that has done a cost benefit analysis, or just general consideration, of using a Public CA vs. a Private CA for a PKI deployment. Some vendors are becoming very competitive in this space and the arguments are all one-sided. So aside from cost, I’m looking for potential pitfalls using a public CA might create down the road."

My reply:

My previous assignment started out with building a PKI from scratch. I’d never done this before, so the customer took a gamble on me. I’m very grateful that they did, I learned a huge amount of cool stuff and the final setup turned out pretty nicely! I’ll try and tackle this in four categories.

UPSIDES OF PRIVATE PKI

UPSIDES OF PUBLIC PKI

DOWNSIDES OF PRIVATE PKI

DOWNSIDES OF PUBLIC PKI

If your infrastructure needs to be cut off from the outside world, you will HAVE to run your own, private PKI.

I’ve recently presented on the basics of PKI and on building your own PKI, be it for fun, for testing or production use. The most important take-away was: “If you’re going to do it, do it right!”. You do NOT simply fire up a Linux box with OpenSSL, or a single instance Windows Server box with ADCS and that’s that. If you’re going to do it right, you will define policy documents, processes and work instructions that are to be strictly followed, you’ll consider HA and DR and you’ll include HSMs (Hardware Security Modules). The latter are awesomely cool tech to work with, but they can get pricy depending on your wants and needs.

Remember: PKI might be cool tech, but the point of it all is TRUST. And if trust is damaged, your whole infrastructure can go tits-up.

kilala.nl tags: pki, work, sysadmin,

2019-03-12 20:02:00

The past week I've gotten my start in an Ansible course and a book, starting my work towards RedHat's EX407 Ansible exam. I've been wanting to get a start in Ansible, after learning a lot about Puppet a few years back. And if I manage to pass EX407 it'll renew my previous RedHat certs, which is great.

Anywho! The online course has its own lab environment, but I'm also applying all that I learn to my homelab. So far Ansible managed the NTP settings, local breakglass accounts and some systems hardening. Next stop was to ensure that my internal PKI's certificates get added to the trust stores of my Linux hosts. I've done this before on RedHat derivatives (CentOS, Fedora, etc), but hadn't done the trick on Debian-alikes (Ubuntu, Kali, etc) yet.

First stop, this great blog post by Confirm IT Solutions. They've provided an example Ansible playbook for doing exactly what I want to do. :) I've taken their example and I'm now refactoring it into an Ansible role, which will also work for Kali (which unfortunately has unwieldy ansible_os_family and ansible_distribution values).

To summarize the differences between the two distributions:

RedHat expects:

Debian expects:
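Putting both families side by side, here is a minimal sketch of how a role could branch on the OS family. The directories and refresh commands are the stock defaults of each distribution family, not values taken from the playbook mentioned above. The dictionary, the function name and the certificate file name are made up for illustration.

```python
from pathlib import PurePosixPath

# Stock trust-store locations per OS family (assumption: default system layout).
TRUST_STORES = {
    "RedHat": {
        "anchor_dir": "/etc/pki/ca-trust/source/anchors",
        "update_cmd": ["update-ca-trust", "extract"],
        "force_suffix": None,      # file name is kept as-is
    },
    "Debian": {
        "anchor_dir": "/usr/local/share/ca-certificates",
        "update_cmd": ["update-ca-certificates"],
        "force_suffix": ".crt",    # only *.crt files are picked up here
    },
}


def plan_trust_install(os_family: str, cert_name: str):
    """Return (destination path, refresh command) for one CA certificate."""
    store = TRUST_STORES[os_family]  # raises KeyError for unknown families
    name = PurePosixPath(cert_name)
    if store["force_suffix"]:
        name = name.with_suffix(store["force_suffix"])
    return str(PurePosixPath(store["anchor_dir"]) / name), store["update_cmd"]


for family in ("RedHat", "Debian"):
    print(family, plan_trust_install(family, "corp-root-ca.pem"))
```

A distribution that reports an unexpected family value would need its own entry, or a lookup on the distribution name instead.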

kilala.nl tags: work, sysadmin,

2019-02-25 09:57:00

Continuing with security improvements all site and domain admins can apply: everybody that runs their own domain can and should implement SPF: Sender Policy Framework.

What it does, is explicitly tell the whole Internet which email servers are allowed to send email on behalf of your domain(s). Like many similar advertisements, this is achieved through DNS records. You can handcraft one, but if things get a bit too complicated, you can also use the handy-dandy SPF Wizard.
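To give an idea of what a handcrafted record looks like, here is a minimal sketch that assembles the value of an SPF TXT record. The function name, the address 192.0.2.10 and the domain _spf.example.net are placeholders for illustration, and only a few common mechanisms are covered.

```python
def build_spf(mx=True, ip4=(), include=(), policy="-all"):
    """Assemble the text of an SPF TXT record from a few common mechanisms."""
    if policy not in ("-all", "~all", "?all", "+all"):
        raise ValueError(f"unknown policy: {policy}")
    parts = ["v=spf1"]
    if mx:
        parts.append("mx")                         # hosts listed in the domain's MX records
    parts += [f"ip4:{net}" for net in ip4]         # explicit IPv4 addresses or ranges
    parts += [f"include:{dom}" for dom in include] # reuse another domain's SPF policy
    parts.append(policy)                           # what to do with everyone else
    return " ".join(parts)


record = build_spf(ip4=["192.0.2.10"], include=["_spf.example.net"])
print(record)  # v=spf1 mx ip4:192.0.2.10 include:_spf.example.net -all
```

The resulting string is what goes into a TXT record on the domain itself. The closing "-all" tells receivers to reject mail from any server not listed, while "~all" is the softer variant.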

kilala.nl tags: work, sysadmin,

2019-02-07 18:11:00

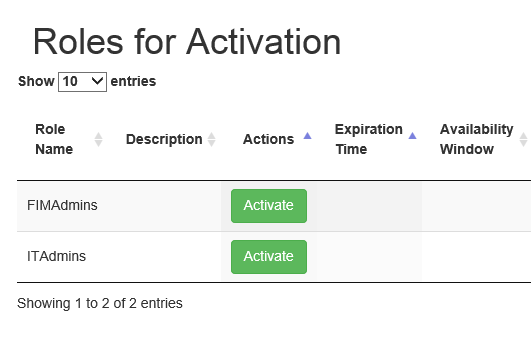

If the screenshot above looks familiar to you, you need to pay attention. (Image source)

Microsoft's MIM is a widely used identity management platform for corporate environments. Many MIM tutorials, guides and books (including Microsoft's own site) [1][2][3] refer to Microsoft's sample PAM portal [4] to demonstrate how a request handling frontend could work. In this context, PAM stands for: "Privileged Access Management". While some of these sources make it clear that this is merely a demonstration, I can say without a doubt that there are companies that put this sample PAM portal to use in production environments. [5][6][7][8] Let me restate: there are enterprises putting the sample PAM Portal into production!

In short, the PAM portal allows an authenticated user to activate MIM "roles", which in turn will add groups to their account on-demand. By activating a role, MIM interacts with Active Directory and adds the groups configured for the role, to the end user's account. Unfortunately the sample PAM portal is not suited for production and I suspect that it has had little scrutiny with regards to the OWASP Top 10 vulnerabilities.

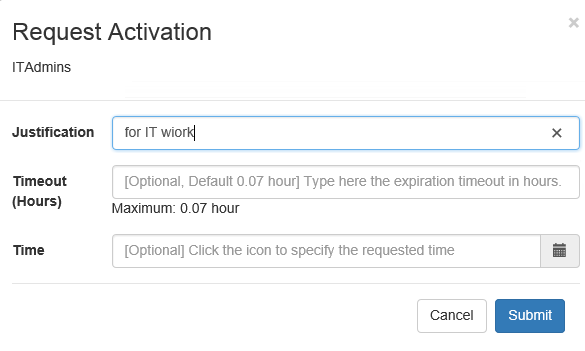

The cross-site scripting vulnerability that I ran into concerns the "Justification" field shown in the screenshot below. (Image source)

When activating a role, the end-user is presented with a popup asking for details of the request. The field labeled "justification" allows free entry of any text. It is not sanitized and the length appears to be limited to 400 characters. Through testing I have proven the ability to enter values such as:

<script>alert("Hello there, this is a popup.");</script>

<script>alert(document.cookie);</script>

These Javascript snippets are entered into the backend database without sanitization or conversion. The aforementioned 400-character limit is easily enough for instructions to download and run shell code.

If we look at "Roles.js" on the Github page we see the following, where the form contents are loaded directly into a variable, without sanitization.

$("form#createRequestForm").submit(function(e){

var roleId = $("#roleIdInput").attr("value");

var justification = $("#justificationInput").val();

... ...

$.when(createPamRequest(justification,roleId,reqTTL,reqTime))

... ...

The "createPamRequest" function is defined in "pamRestApi.js", where yet again the input is not sanitized.

function createPamRequest(reqJustification, reqRoleId, reqTTL, reqTime) {

var requestJson = { Justification: reqJustification, RoleId: reqRoleId, RequestedTTL: reqTTL, RequestedTime : reqTime };

return $.ajax({

url: BuildPamRestApiUrl('pamrequests'),

type: 'POST',

data: requestJson,

xhrFields: {

withCredentials: true

}

})

}

The XSS comes into play when browsing to the "Requests" (History) or the "Approvals" tabs of the sample PAM portal. These pages respectively show the user's own history of (de)activation and other users' requests that are pending approval. After entering the code snippets above, visiting the "History" tab results in two popups: one with the short message and another one blank, as there are no cookie contents.

One viable attack vector would be:

The aforementioned sample PAM portal is a collection of Javascript bundles and functions, thrown together with some CSS and HTML. It has no database of its own, nor any data of its own. All of the contents are gathered from the MIM (Microsoft Identity Manager) database, through the MIM JSON REST API.

Based on the previously discussed vulnerability we can conclude that the MIM JSON REST API does not perform input validation or sanitization! At the very least not on the "Justification" field. The Javascript code I entered into the form was passed directly through the JSON API into the MIM database and was later pulled back from it (for the "Requests" and "Approvals" pages).

I have also verified this by delving directly into the database using SQL Management Studio. The relevant field in the database literally contains the user's input. There is no transcoding, no sanitization, etc.

I reported these issues to Microsoft through their responsible disclosure program in December, right before the holidays. After investigating the matter internally, they have provided a fix to the sample PAM Portal. The January 2019 revision of the code is no longer susceptible to an XSS attack.

Microsoft's resolution consists of hardening the code of the PAM Portal itself: no data retrieved from the database will be interpreted as HTML. Instead it is always treated as plain text. Refer to the Github pull request chat for details.
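The general idea behind this kind of fix is output encoding: whatever sits in the database is escaped before it lands in the page. The sketch below illustrates that principle in Python with the standard library's html.escape. It is not Microsoft's actual patch (the portal itself is Javascript) and the function name is made up for illustration.

```python
import html


def render_justification(text: str) -> str:
    """Return an HTML table cell that shows the stored text as plain text."""
    # html.escape turns <, >, & and quotes into entities, so the browser
    # displays the markup instead of executing it.
    return f"<td>{html.escape(text)}</td>"


payload = '<script>alert("Hello there, this is a popup.");</script>'
cell = render_justification(payload)
print(cell)
```

The stored value remains untouched in the database. It is simply never handed to the browser as markup, which is why any other client of the same API still has to take care of this itself.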

They have NOT adjusted the MIM PAM REST API, which will continue to accept and store any user input offered. This means that accessing the API through Invoke-WebRequest is still susceptible to an XSS attack, because I-WR will happily run any Javascript code found. I showed this with examples earlier this week.

Anyone using the Microsoft MIM PAM Portal in their network should upgrade to the latest version of the project as soon as possible.

Also, if you are using the Powershell command Invoke-WebRequest to access the MIM PAM REST API, you should always add the flag -UseBasicParsing.

kilala.nl tags: work, sysadmin,

2019-02-04 13:45:00

Well! It's been an interesting month, between work and a few vulnerabilities that I'd reported to a vendor. And now there's this little surprise!

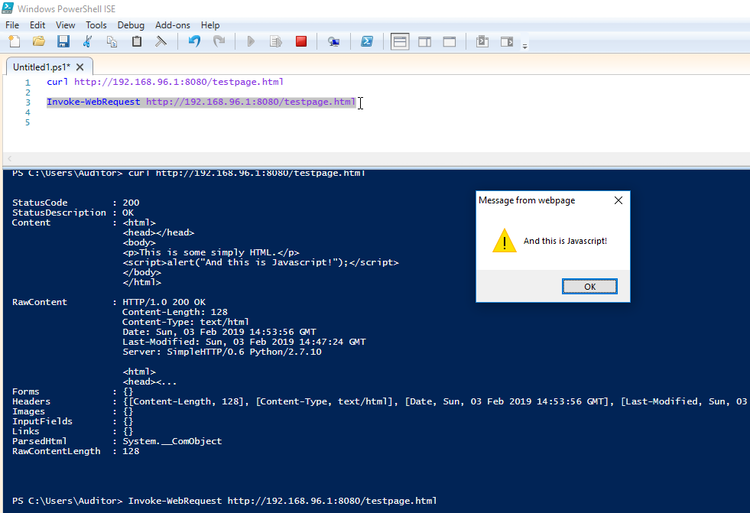

Imagine that you're using Powershell's Invoke-WebRequest command in your management scripts, to access an API or to pull in some data. It happens, right? Nothing out of the ordinary! While I was pentesting one particular API, I decided to poke at it manually using Invoke-WebRequest, only to be met with a surprising bonus! The Javascript code I'd sent to the API for an XSS-attack was returned as part of the reply by the API. Lo and behold! My I-WR ran the Javascript locally!

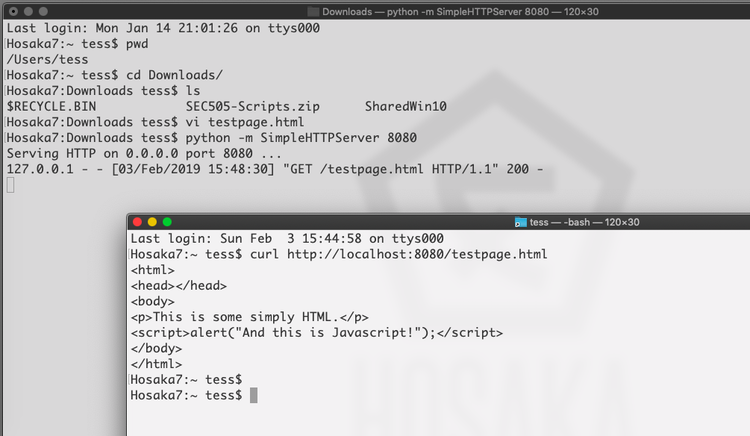

Screenshot 1 shows the server-side of my proof-of-concept: Python running a SimpleHTTPServer, serving up "testpage.html" from my laptop's MacOS.

In the image above you'll also see the Unix/Linux/MacOS version of curl, which simply pulls down the whole HTML file without parsing it.

Now, the image below shows what happens when you pull in the same page through Invoke-WebRequest in Powershell:

Fun times!

This means that every time you run a curl or Invoke-WebRequest on Windows, you'd better be darn sure about the pages you're calling! This Javascript alert is benign enough, but we all know the dangers of cross-site scripting attacks or just plain malevolent Javascript! Annoyingly, I have not yet found a way to disable JS-parsing in these commands. Looks like it can't be done.

What's worse: these commands are often included in scripts that are run using service accounts or by accounts with administrative privileges! That runs afoul of Critical Security Control #5: controlled use of administrative privileges! (More info here @Rapid7). Basically, you're running a whole web browser in your scripting and tooling!

So be careful out there folks! Think before you run scripts! Check before you call to URLs you're not familiar with! Trust, but verify!

EDIT: I've sent an email to Microsoft's security team, see what they think about all this. I mean, I'm sure it's a well-known and documented fact, but personally I'd feel a lot safer if I had the option to disable scripting (like JS) in Invoke-WebRequest.

EDIT: It looks like the only way to disable Javascript in Invoke-WebRequest, is to disable it in the Internet Explorer browser. Guess that makes sense, because doesn't I-WR use the IE engines?

After discussing the matter with the security team of Microsoft, I have come to understand that I have misunderstood the documentation provided for Invoke-WebRequest. It turns out that you can easily protect yourself from this particular problem by always adding the flag -UseBasicParsing.

kilala.nl tags: work, sysadmin,

2019-01-17 19:27:00

EDIT:

Oooff... Linking to my homebrew website on a SANS Twitter-feed; how's that for #LivingDangerously? For the love of cookies, please don't hack me. I like my Dreamhost account... ^_^

About a month ago I explained a bit about the amazing chance I'd been offered by SANS, when they accepted me into their Work/Study Program. My week with SANS is coming to its end, so I thought I'd share a few of my experiences. Quite a few others have shared their stories in the past (linked below), but this is mine. :)

As was expected the days are pretty long and the work is hard. But for me they haven't been unbearably long, nor impossibly hard. Overall the atmosphere at SANS Amsterdam has been pretty laidback!

Before coming to town, our event managers had set up a WhatsApp group so we could stay in close contact before and during the event. This turned out to be very helpful, as we could keep messaging each other during class through the magic of WA's webapp. You can count on silly memes flying through that chat, but it's been mostly useful :)

Sunday was spent moving and unpacking 250 boxes of books into the respective eight rooms. There's a rather specific layout that SANS want their student-tables to be in (books stacked exactly so-and-so, pen here w/ yellow cap there, logo pointing here and so on). As another Facilitator said: "Clearly someone has put a lot of thought into this...". I've found that, after putting the boxes on the ground in a circle around me, I got into the rhythm of making the stacks real quickly. Setting up the mics and speakers and rigging powerlines was a nice flashback to my days with AnimeCon.

Choosing not to stay at an Amsterdam hotel has been both a boon and a burden. Traveling home allows me to see my family every night and saves me quite some dough. It'll also take my head out of SANS a little bit, so I can unwind. On the other hand I'm missing out on the nightly sessions and NetWars.

Working with the SEC566 trainer Russell has been nothing but a pleasure. As he himself said, he's "pretty low maintenance". He doesn't need me to go around town to grab things for him, just make sure his water bottles are always available and that the room's ready for use. So instead, most of my time went to the rest of the party: cleaning the room, prepping for the next day and making sure that the other students are "in a good place". A few people were having issues with their lab VMs, some folks had questions about practical SANS matters and others were simply looking for a nice chat.

Speaking of: I can honestly say that it's been a long while since I've spent time with such a friendly group of people! I know that some folks on the web have been complaining that the InfoSec industry has been toxifying in recent years, but at least we didn't notice anything 'bout that at SANS Amsterdam. I've met quite a few fun and interesting people here!

In short: I am very grateful for the opportunity SANS have given me and I would recommend applying for the role to anyone in a heartbeat!

EDIT: Because some people have asked, here's my "normal" workday as Facilitator, traveling from home in Almere to Amsterdam.

During the lab exercises I usually work ahead, so I'm one chapter ahead of the class. That will allow me to know upfront what kind of problems they may run into and may need help with. As others on TechExams.net have pointed out, Facilitators are NOT the same as TAs (teaching assistants). So on the one hand I am constantly a bit anxious about whether or not I'm butting into the trainer's ground. On the other hand I've had good responses from both classmates and the trainer, so I reckon I didn't tick anyone off... At least not this time :D

I can imagine that it'd be entirely different in a tech-oriented class. I'd have to pipe down a lot more than I did this week.

kilala.nl tags: work, sysadmin,

2019-01-11 21:06:00

So far I've built a few VMs in my homelab, to house my AD DS and AD CS services (the Directory Services and PKI respectively). There's also a few CentOS 7 boxen spinning up to house Graylog and ElasticSearch.

Up until this point, all these VMs were getting their IP addresses from our home's internal network infrastructure. Of course it's always a bad idea to mix production and dev/test environments, so I've set up segregation between the two. The easiest way to achieve this will also help me achieve one of my goals for 2019: get acquainted with the pfSense platform.

pfSense is a FreeBSD-based, open source platform for routers/firewalls that can be run either as a VM or on minimalistic ARM hardware. In my case, I've done a setup comparable to Garrett Mills' example on Medium.com. In short:

BAM! The dev/test VMs are now tucked away into their pocket universe, invisible to our home network.

EDIT:

The pfSense folks also provide nice documentation on setting up their product inside VMWare ESX.

kilala.nl tags: work, sysadmin,

2019-01-10 21:47:00

For the past X years, I've run my homelab on my Macbook Air. I've always been impressed with how much you can get away with on this light portable, sporting an i5 and 8GB of RAM. It'll run two Win2012 VMs and a number of small Linux hosts, alongside the MacOS host.

But there's the urge for more! I've been playing with so much cool stuff over the years! I want to emulate a whole corporate environment for my studies and tests!

Like the OpenSOC folks, I've been eyeing those Skull Canyon Intel NUCs. They're so sexy! Tiny footprint, combined with great performance! But they're also quite expensive and they don't have proper storage on board. My colleague Martin put me on the trail of local refurbishers and last week I hit gold.

Well... Fool's Gold, maybe. But still! It was shiny, it looked decent and the price was okay. I bought a refurbished Dell R410.

Quick specs:

Yes, it's pretty old already (generation 11 Dell hardware). Yes, it's power hungry. Yes, it's loud. But it was affordable and it's giving me a chance to work with enterprise hardware again, after being out of the server rooms for a long while.

After receiving the pizza box and inspecting it for damage, the first order of business was to set up its iDRAC6. iDRAC is Dell's solution to what vendors like HP call iLO: a tiny bit of embedded hardware that can be used across the network to manage the whole server's hardware.

The iDRAC configuration was tackled swiftly and the web interface was available immediately. It took a bit of digging in Dell's documentation, but I learned how to flash the iDRAC6 firmware so I could upgrade it to the latest (2.95) version. It really was as easy as downloading the "monolithic" iDRAC firmware, extracting the .D6 file and uploading it through the iDRAC web interface. Actually finding the upload/update button in the interface took more effort :p

Getting the iDRAC6 remote console working took a little more research. For version 6 of the hardware, the remote console relies upon a Java application, which you can call by clicking a button in the web interface. What this does is download a JNLP configuration file, which in turn downloads the actual JAR file for execution. This is a process that doesn't work reliably on modern MacOS due to all the restrictions put on Java. The good news is that Github user Nicola ("XBB") provides instructions on how to reliably and quickly start the remote console for any iDRAC6 on MacOS, Linux and Windows.

Last night I installed VMWare ESXi 6.5, which I've been told is the highest version that'll work on this box. No worries, it's good stuff! The installation worked well, installing onto a SanDisk Cruzer Fit mini USB-drive that's stuck into the front panel. I still have a lot of learning to do with VMWare :)

In the meantime, there's two VMs building and updating (Win2012 and CentOS7), so I can use them as the basis for my "corporate" environment.

My plans for the near future:

I'm having so much fun! :D

kilala.nl tags: work, sysadmin,

2018-11-28 16:49:00

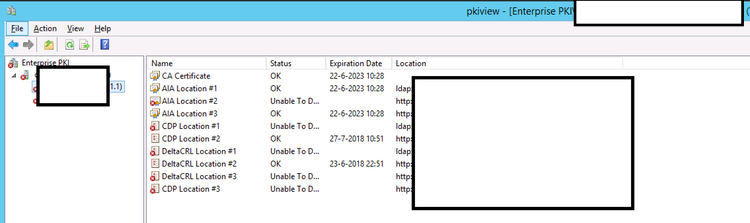

Following up on my previous post on querying ADCS with certutil, I spent an hour digging around ADCS some more with a colleague. We were looking for ways to make our lives easier when performing certificate life cycle management, i.e. figuring out which certs need replacing soon.

Want to find all certs that expire before 0800 on January first 2022?

certutil -view -restrict "NotAfter<1/1/2022 08:00"

However, this also shows the revoked certificates, so let's focus on those that have the status "issued". Here's a list of the most interesting disposition values.

certutil -view -restrict "NotAfter<1/1/2022 08:00,Disposition=0x14"

Now that'll give us the full dump of those certs, so let's focus on just getting the relevant request IDs.

certutil -view -restrict "NotAfter<1/1/2022 08:00,Disposition=0x14" -out "RequestId"
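If you'd rather assemble these queries from a script than type them by hand, here's a minimal Python sketch. The helper names (`expiring_before`, `build_view_command`) are my own invention and not part of any tool. The sketch assumes the CA host parses dates as M/D/YYYY like in the examples above, which depends on the locale of the machine. Building an argument list sidesteps the quoting of the restriction string altogether.

```python
from datetime import datetime


def expiring_before(deadline):
    """Build a NotAfter restriction in the M/D/YYYY HH:MM form used above.

    Assumption: the CA host parses dates in this (US-style) order.
    """
    return f"NotAfter<{deadline.month}/{deadline.day}/{deadline.year} {deadline:%H:%M}"


def build_view_command(restrictions, out_columns=None):
    """Return an argument list for `certutil -view` (hypothetical helper)."""
    argv = ["certutil", "-view", "-restrict", ",".join(restrictions)]
    if out_columns:
        argv += ["-out", ",".join(out_columns)]
    return argv


cmd = build_view_command(
    [expiring_before(datetime(2022, 1, 1, 8, 0)), "Disposition=0x14"],
    ["RequestId"],
)
print(cmd)
```

The resulting list can be handed to `subprocess.run(cmd, capture_output=True, text=True)` on a machine that has certutil and access to the CA.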

Mind you, many certs can be set up to auto-enroll, which means we can automatically renew them through the ADCS GUI by going into Template Management and telling AD to tweak all currently registered holders, making them re-enroll. That's a neat trick!

Of course this leaves us with a wad of certificates that need manual replacement. It's easier to handle these on a per-template basis. To filter on these, we'll need to get the template ID. You can do this through the ADCS GUI, or you can query a known cert and output its cert template ID.

certutil -view -restrict "requestid=3162" -out certificatetemplate

So our query now becomes:

certutil -view -restrict "NotAfter<1/1/2022 08:00,Disposition=0x14,certificatetemplate=1.3.6.1.4.1.311.21.8.7200461.8477407.14696588202437.5899189.95.14580585.6404328" -out "RequestId"

Sure, the output isn't easily used in a script unless you add some output parsing (there are blank lines and all manner of cruft around the request IDs), but you get the picture. This will at least help you get a quick feeling for the amount of work you're up against.
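For what it's worth, the parsing doesn't have to be much. Below is a rough Python sketch that fishes the request IDs out of the text. It assumes each row is printed as `Request ID: 0x<hex> (<decimal>)`, which is what I'd expect from an English-language CA. The sample text is made up for illustration, so check it against your own output before trusting it.

```python
import re

# Assumed row layout: "  Request ID: 0xc5a (3162)"; the decimal value is captured.
ROW_RE = re.compile(
    r"^[ \t]*Request ID:[ \t]*0x[0-9a-fA-F]+[ \t]*\((\d+)\)[ \t]*$",
    re.MULTILINE,
)


def extract_request_ids(certutil_output):
    """Return the decimal request IDs found in certutil -view output."""
    return [int(value) for value in ROW_RE.findall(certutil_output)]


# Made-up sample, only meant to mimic the general shape of the output.
SAMPLE = """
Row 1:
  Request ID: 0xc5a (3162)

Row 2:
  Request ID: 0xc5b (3163)

Maximum Row Index: 2
"""

print(extract_request_ids(SAMPLE))
```

Counting the list with `len()` gives that quick feeling for the amount of work without scrolling through the dump.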

kilala.nl tags: work, sysadmin,

2018-11-22 19:35:00

I've been studying MongoDB recently, through the excellent Mongo University. I can heartily recommend their online courses! While not entirely self-paced, they allow you enough flexibility to finish each course within a certain timeframe. They combine video lectures with (ungraded) quizzes, graded labs and an exam. Good stuff!

I'm currently taking M310, the MongoDB Security course. One of the subjects covered is Kerberos authentication with MongoDB. In their lectures they show off a use-case with a Linux KDC, but I was more interested in copying the results with my Active Directory server. It took a little puzzling, a few good sources (linked below) and three hours of mucking with the final troubleshooting. But it works very nicely!

On the Active Directory side:

We'll have to make a normal user / service account first. I'll call it svc-mongo. This can easily be done in many ways; I used ADUC (AD Users and Computers).

Once svc-mongo exists, we'll connect it to a new Kerberos SPN: a Service Principal Name. This is how MongoDB will identify itself to Kerberos. We'll make the SPN, link it to svc-mongo and make the associated keytab (an authentication file, consider it the user's password) all in one blow:

ktpass /out m310.keytab /princ mongodb/database.m310.mongodb.university@CORP.BROEHAHA.NL /mapuser svc-mongo /crypto AES256-SHA1 /ptype KRB5_NT_PRINCIPAL /pass Password2

This creates the m310.keytab file and maps the SPN "mongodb/database.m310.mongodb.university" to the svc-mongo account. The SPN is written in the format "service/fullhostname"; in the /princ argument the Kerberos realm (the AD domain name in capitals) is appended after an @. The password for the user is also changed and some settings are set pertaining to the used cryptography and Kerberos structures.

You can verify the SPN's existence with the setspn -Q command. For example:

PS C:\users\Thomas\Documents> setspn -Q mongodb/database.m310.mongodb.university

Checking domain DC=corp,DC=broehaha,DC=nl

CN=svc-mongo,CN=Users,DC=corp,DC=broehaha,DC=nl

mongodb/database.m310.mongodb.university

Existing SPN found!

The m310.keytab file is then copied to the MongoDB server (database.m310.mongodb.university). In my case I use SCP, because I run Mongo on Linux.

On the Linux side:

The m310.keytab file is placed into /etc/, with permissions set to 640 and ownership root:mongod. In order to use the keytab we can set an environment variable: KRB5_KTNAME="/etc/m310.keytab". This can be done in the profile of the user running MongoDB, or on RHEL derivatives in a sysconfig file.
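Since the keytab is effectively a password, it doesn't hurt to double-check those permissions. Here's a small Python sketch that does so. The function name `keytab_mode_ok` is just something I made up, and it only looks at the mode bits, not at the root:mongod ownership.

```python
import os
import stat


def keytab_mode_ok(path, expected=0o640):
    """Return True if the file's permission bits equal `expected` (default 640)."""
    return stat.S_IMODE(os.stat(path).st_mode) == expected


if __name__ == "__main__":
    keytab = "/etc/m310.keytab"  # path taken from the text above
    if os.path.exists(keytab):
        print(keytab, "has mode 640:", keytab_mode_ok(keytab))
    else:
        print(keytab, "not found on this machine")
```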

We need to set up /etc/krb5.conf with the bare minimum, so the Kerberos client can find the domain:

[libdefaults]

default_realm = CORP.BROEHAHA.NL

[realms]

CORP.BROEHAHA.NL = {

kdc = corp.broehaha.nl

admin_server = corp.broehaha.nl

}

[domain_realm]

.corp.broehaha.nl = CORP.BROEHAHA.NL

corp.broehaha.nl = CORP.BROEHAHA.NL

[logging]

default = FILE:/var/log/krb5.log

Speaking of finding the domain, there are a few crucial things that need to be set up correctly!

With that out of the way, we can start making sure that MongoDB knows about my personal user account. If the Mongo database does not yet have any user accounts set up, then we'll need to use the "localhost exception" so we can set up a root user first. Once there is an administrative user, run MongoD in normal authorization-enabled mode. For example, again the barest of bare minimums:

mongod --auth --bind_ip database.m310.mongodb.university --dbpath /data/db

You can then connect as the administrative user so you can set up the Kerberos account(s):

mongo --host database.m310.mongodb.university:27017 --authenticationDatabase admin --user root --password

MongoDB> use $external

MongoDB> db.createUser({user:"tess@CORP.BROEHAHA.NL", roles:[{role:"root",db:"admin"}]})

And with that out of the way, we can now actually use Kerberos auth. We'll restart MongoD with Kerberos enabled, at the same time disabling the standard Mongo password authentication and thus locking out the root user we used above.

mongod --auth --bind_ip database.m310.mongodb.university --setParameter authenticationMechanisms=GSSAPI --dbpath /data/db

We can then request a Kerberos ticket for my own account, start a Mongo shell and authenticate inside Mongo as myself:

root@database:~# kinit tess@CORP.BROEHAHA.NL -V

Using default cache: /tmp/krb5cc_0

Using principal: tess@CORP.BROEHAHA.NL

Password for tess@CORP.BROEHAHA.NL:

Authenticated to Kerberos v5

root@database:~# mongo --host database.m310.mongodb.university:27017

MongoDB shell version: 3.2.21

connecting to: database.m310.mongodb.university:27017/test

MongoDB Enterprise > use $external

switched to db $external

MongoDB Enterprise > db.auth({mechanism:"GSSAPI", user:"tess@CORP.BROEHAHA.NL"})

1

HUZZAH! It worked!

Oh right!.. What was the thing that took me hours of troubleshooting? Initially I ran MongoD without the --bind_ip option to tie it to the external IP address and hostname. I was running it on localhost. :( And thus the MongoD process identified itself to the KDC as mongodb/localhost. It never showed that in any logging, so that's why I missed it. I had assumed that simply passing the keytab file was enough to authenticate.
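To make that mismatch a bit more tangible, here's a tiny Python illustration. It is not how MongoD builds its principal internally, just a sketch of the comparison that was silently failing. The helper names are mine, and the keytab principal is the /princ value from the ktpass command earlier.

```python
# The principal that was baked into the keytab by ktpass.
KEYTAB_PRINCIPAL = "mongodb/database.m310.mongodb.university@CORP.BROEHAHA.NL"


def service_principal(host, realm, service="mongodb"):
    """Compose a principal as service/host@REALM (illustration only)."""
    return f"{service}/{host}@{realm}"


def matches_keytab(host, realm="CORP.BROEHAHA.NL"):
    """Return True if a server known as `host` would match the keytab entry."""
    return service_principal(host, realm) == KEYTAB_PRINCIPAL


for host in ("localhost", "database.m310.mongodb.university"):
    print(host, "->", service_principal(host, "CORP.BROEHAHA.NL"), matches_keytab(host))
```

Only the full hostname lines up with the keytab, which is why binding to the external hostname made the difference.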

Sources:

kilala.nl tags: work, sysadmin,

2018-11-01 18:44:00

I think Microsoft's ADCS is quite a nice platform to work with, as far as PKI systems go. I've heard people say that it's one of the nicest out there, but given its spartan interface that kind of makes me worry for the competitors! One of the things I've fought with was querying the database backend to find certificates matching specific details. It took me a lot of Googling and messing around to come up with the following examples.

To get the details of a specific request:

certutil -view -restrict "requestid=381"

To show all certificate requests submitted by myself:

certutil -view -restrict "requestername=domain\t.sluijter"

To show all certificates that I requested, displaying the serial numbers, the requestor's name and the CN on the certificate. It'll even show some statistics at the bottom:

certutil -view -restrict "requestername=domain\t.sluijter" -out "serialnumber,requestername,commonname"

Show all certificates provided to TESTBOX001. The query language is so unwieldy that you'll have to ask for "hosts >testbox001 and <testbox002".

certutil -view -restrict "commonname>testbox001,commonname<testbox002" -out "serialnumber,requestername,commonname"
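That "greater than X, less than the next X" trick can be generated rather than typed. Here's a minimal Python sketch, where `prefix_range` is a name I made up. It bumps the last character of the hostname to get the upper bound, and it assumes ADCS compares these strings in plain character order, which I haven't verified.

```python
def prefix_range(column, prefix):
    """Return two restrictions bracketing values that sort right after `prefix`.

    The upper bound is `prefix` with its final character incremented,
    e.g. testbox001 -> testbox002 (and a trailing 9 becomes ':').
    """
    if not prefix:
        raise ValueError("prefix must not be empty")
    upper = prefix[:-1] + chr(ord(prefix[-1]) + 1)
    return [f"{column}>{prefix}", f"{column}<{upper}"]


print(",".join(prefix_range("commonname", "testbox001")))
```

The joined string can be passed as the value for -restrict, just like in the example above.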